"connectionString": "jdbc:snowflake://./?user=&password=&db=&warehouse=&role=" If not specified, it uses the default Azure integration runtime. You can use the Azure integration runtime or a self-hosted integration runtime (if your data store is located in a private network). The integration runtime that is used to connect to the data store. The specified role should be an existing role that has already been assigned to the specified user. Role: The default access control role to use in the Snowflake session. It should be an existing warehouse for which the specified role has privileges. Warehouse: The virtual warehouse to use once connected. It should be an existing database for which the specified role has privileges. Database: The default database to use once connected. User name: The login name of the user for the connection.

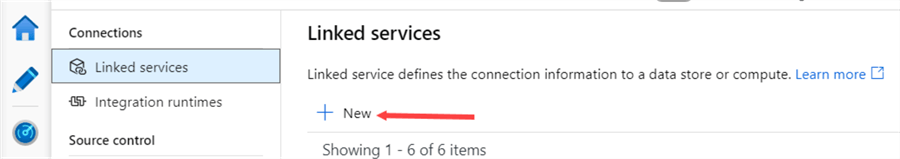

Account name: The full account name of your Snowflake account (including additional segments that identify the region and cloud platform), e.g. Refer to the examples below the table, and the Store credentials in Azure Key Vault article, for more details. You can choose to put password or entire connection string in Azure Key Vault. Specifies the information needed to connect to the Snowflake instance. The type property must be set to Snowflake. The following properties are supported for a Snowflake linked service when using Basic authentication. See the corresponding sections for details. This Snowflake connector supports the following authentication types. The following sections provide details about properties that define entities specific to a Snowflake connector. Search for Snowflake and select the Snowflake connector.Ĭonfigure the service details, test the connection, and create the new linked service. Use the following steps to create a linked service to Snowflake in the Azure portal UI.īrowse to the Manage tab in your Azure Data Factory or Synapse workspace and select Linked Services, then click New: To perform the Copy activity with a pipeline, you can use one of the following tools or SDKs:Ĭreate a linked service to Snowflake using UI Specifies whether to require using a named external stage that references a storage integration object as cloud credentials when loading data from or unloading data to a private cloud storage location.įor more information about the network security mechanisms and options supported by Data Factory, see Data access strategies. REQUIRE_STORAGE_INTEGRATION_FOR_STAGE_OPERATION Specifies whether to require a storage integration object as cloud credentials when creating a named external stage (using CREATE STAGE) to access a private cloud storage location. 
REQUIRE_STORAGE_INTEGRATION_FOR_STAGE_CREATION The following Account properties values must be set Property In addition, it should also have CREATE STAGE on the schema to be able to create the External stage with SAS URI. The Snowflake account that is used for Source or Sink should have the necessary USAGE access on the database and read/write access on schema and the tables/views under it. If the access is restricted to IPs that are approved in the firewall rules, you can add Azure Integration Runtime IPs to the allowed list. If your data store is a managed cloud data service, you can use the Azure Integration Runtime. Make sure to add the IP addresses that the self-hosted integration runtime uses to the allowed list. If your data store is located inside an on-premises network, an Azure virtual network, or Amazon Virtual Private Cloud, you need to configure a self-hosted integration runtime to connect to it. If a proxy is required to connect to Snowflake from a self-hosted Integration Runtime, you must configure the environment variables for HTTP_PROXY and HTTPS_PROXY on the Integration Runtime host.Copy data to Snowflake that takes advantage of Snowflake's COPY into command to achieve the best performance.Copy data from Snowflake that utilizes Snowflake's COPY into command to achieve the best performance.① Azure integration runtime ② Self-hosted integration runtimeįor the Copy activity, this Snowflake connector supports the following functions: This Snowflake connector is supported for the following capabilities: Supported capabilities For more information, see the introductory article for Data Factory or Azure Synapse Analytics. This article outlines how to use the Copy activity in Azure Data Factory and Azure Synapse pipelines to copy data from and to Snowflake, and use Data Flow to transform data in Snowflake.
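To make the Basic authentication settings more concrete, here is a minimal Python sketch that assembles a connection string in the `jdbc:snowflake://...` form shown in this article and wraps it in a linked service definition. The function names are hypothetical, the `.snowflakecomputing.com` host suffix is an assumption, and the surrounding `name` / `properties` / `typeProperties` / `connectVia` layout is assumed from the usual linked service JSON shape, since the article shows only the `connectionString` line. Treat it as an illustration, not the connector's official template.

```python
import json
from urllib.parse import quote


def build_connection_string(account, user, password, database, warehouse, role):
    """Assemble a Snowflake JDBC connection string from its parts.

    `account` is the full account name, including any region and cloud
    segments. The `.snowflakecomputing.com` suffix is an assumption.
    """
    params = [
        ("user", user),
        ("password", password),
        ("db", database),
        ("warehouse", warehouse),
        ("role", role),
    ]
    # Percent-encode each value so characters such as '&' or '@' in a
    # password cannot break the query string.
    query = "&".join(f"{key}={quote(value, safe='')}" for key, value in params)
    return f"jdbc:snowflake://{account}.snowflakecomputing.com/?{query}"


def build_linked_service(name, connection_string, integration_runtime=None):
    """Wrap a connection string in a linked service definition (assumed layout)."""
    linked_service = {
        "name": name,
        "properties": {
            "type": "Snowflake",  # the type property must be set to Snowflake
            "typeProperties": {"connectionString": connection_string},
        },
    }
    # Omitting the integration runtime reference leaves the default
    # Azure integration runtime in effect.
    if integration_runtime is not None:
        linked_service["properties"]["connectVia"] = {
            "referenceName": integration_runtime,
            "type": "IntegrationRuntimeReference",
        }
    return linked_service


def to_json(linked_service):
    """Serialize the definition as indented JSON for review or deployment."""
    return json.dumps(linked_service, indent=4)
```

Calling `build_linked_service("SnowflakeLinkedService", build_connection_string(...))` and passing the result to `to_json` yields a document you can compare against the properties listed in this article. In practice the password would more likely be referenced from Azure Key Vault than embedded in the string.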
